Running Microservices with Dapr on Azure Container Apps

This week I spoke on Dapr at the Techorama Netherlands conference. For the talk I took my GloboTicket Dapr demo app from my Dapr Fundamentals Pluralsight course and demonstrated it running on the new Azure Container Apps service.

In this post I'll run through the steps I took to get this working (mostly found in this PowerShell script), including some workarounds for a few limitations. The approach I took was mostly based around the Azure CLI, as this was just a proof of concept. For production applications a better approach would be to use Bicep templates.

Step 1 - Create a container app environment

The first step is to create a container app environment (we'll also create a resource group).

az provider register --namespace Microsoft.App

$RESOURCE_GROUP = "globoticket-containerapps"
$LOCATION = "westeurope"
$CONTAINERAPPS_ENVIRONMENT = "globoticket-abc"

# create the resource group
az group create -n $RESOURCE_GROUP -l $LOCATION

# create the container apps environment (will auto-generate a log analytics workspace for us)
az containerapp env create `
    --name $CONTAINERAPPS_ENVIRONMENT `
    --resource-group $RESOURCE_GROUP `
    --location $LOCATION
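If the commands above fail on a fresh machine, the tooling may not be fully set up yet. Here is a minimal sketch of the extra steps, which are not part of the original script. It assumes the `containerapp` Azure CLI extension is not installed yet and that the `Microsoft.OperationalInsights` provider (used by the auto-generated Log Analytics workspace) still needs registering in your subscription.

```powershell
# Assumption: these steps are not in the original script.
# Install (or upgrade) the Azure CLI extension that provides `az containerapp`
az extension add --name containerapp --upgrade

# Register the provider behind the auto-generated Log Analytics workspace
az provider register --namespace Microsoft.OperationalInsights

# Once the environment has been created, check its provisioning state
az containerapp env show `
    --name $CONTAINERAPPS_ENVIRONMENT `
    --resource-group $RESOURCE_GROUP `
    --query properties.provisioningState -o tsv
```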

Step 2 - Create the resources for the Dapr pub/sub and state store components

For this demo, we are using Azure Blob Storage for the Dapr state store component and Azure Service Bus for the Dapr pub/sub component.

Here's the code I used to create them and harvest the connection strings which we'll need later.

Note: you will need to provide your own unique names for these resources if you try to run this in Azure.

# provide a unique name here
$STORAGE_ACCOUNT = "globoticketstate456"

# create the storage account
az storage account create -n $STORAGE_ACCOUNT -g $RESOURCE_GROUP `
    -l $LOCATION --sku Standard_LRS

# get the connection string
$STORAGE_CONNECTION_STRING = az storage account show-connection-string `
    -n $STORAGE_ACCOUNT -g $RESOURCE_GROUP --query connectionString -o tsv

# get the storage account key (the query is quoted so PowerShell passes it through unchanged)
$STORAGE_ACCOUNT_KEY = az storage account keys list -g $RESOURCE_GROUP `
    -n $STORAGE_ACCOUNT --query "[0].value" -o tsv

# set an environment variable used to create the container:
$env:AZURE_STORAGE_CONNECTION_STRING = $STORAGE_CONNECTION_STRING

# create the container for state store
az storage container create -n "statestore" --public-access off

# you need to pick a unique name
$SERVICE_BUS = "globoticketpubsub456"

# create the service bus namespace
az servicebus namespace create -g $RESOURCE_GROUP `
    -n $SERVICE_BUS -l $LOCATION --sku Standard

# get the connection string
$SERVICE_BUS_CONNECTION_STRING = az servicebus namespace authorization-rule keys list `
    -g $RESOURCE_GROUP --namespace-name $SERVICE_BUS `
    -n RootManageSharedAccessKey `
    --query primaryConnectionString `
    --output tsv
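Because the later steps paste these values into the component files, an empty variable (for example after a failed `az` call) can go unnoticed until deployment. A minimal sketch of a guard follows. `Assert-NotEmpty` is a hypothetical helper name, not something from the original script.

```powershell
# Hypothetical helper (not in the original script): fail fast when a value
# harvested from the Azure CLI came back empty.
function Assert-NotEmpty {
    param(
        [Parameter(Mandatory)] [string] $Name,
        [string] $Value
    )
    if ([string]::IsNullOrWhiteSpace($Value)) {
        throw "Expected a value for '$Name' but it was empty. Did the az command fail?"
    }
}

# Example with the variables from this step:
# Assert-NotEmpty -Name 'STORAGE_CONNECTION_STRING' -Value $STORAGE_CONNECTION_STRING
# Assert-NotEmpty -Name 'STORAGE_ACCOUNT_KEY' -Value $STORAGE_ACCOUNT_KEY
# Assert-NotEmpty -Name 'SERVICE_BUS_CONNECTION_STRING' -Value $SERVICE_BUS_CONNECTION_STRING
```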

Step 3 - Run Maildev using Azure Container Instances

My GloboTicket Dapr demo app uses the maildev test email server which is a single container that combines an SMTP server and a web UI that displays the emails received.

I tried running this on Azure Container Apps, but unfortunately couldn't get it working because there doesn't seem to be a way to expose two ports (see this issue for more info).

However, it can be very easily hosted using Azure Container Instances, so here we create a maildev instance using ACI.

# we are going to use Azure container instances for the maildev server
$MAILDEV_CONTAINER_INSTANCE_NAME = "aci-maildev-abc"
$MAILDEV_DNS = "globoticketmaildev"

az container create -g $RESOURCE_GROUP -n $MAILDEV_CONTAINER_INSTANCE_NAME `
    --image maildev/maildev `
    --ports 1080 1025 --dns-name-label $MAILDEV_DNS

$MAILDEV_SERVER = az container show `
    -g $RESOURCE_GROUP -n $MAILDEV_CONTAINER_INSTANCE_NAME `
    --query ipAddress.fqdn -o tsv

Step 4 - Define the Dapr Components

Dapr components are defined in YAML, and the flavour for Azure Container Apps is slightly different from that used for self-hosted or AKS, so in my demo app I created a separate folder for the ACA components.

The biggest limitation is that, at the time of writing, there seems to be no way to reference a secret from within these component definitions. I hope this will be rectified soon (there are various issues on the GitHub repo about secret support), but for now, to avoid checking secrets into source control, I added placeholders to my YAML files and then created temporary files containing the real secret values for the actual deployment.

This is the pub sub component YAML definition which uses Azure Service Bus:

componentType: pubsub.azure.servicebus
version: v1
metadata:
- name: connectionString
  secretRef: service-bus-connection-string
secrets:
- name: service-bus-connection-string
  value: "<SERVICE_BUS_CONNECTION_STRING>"

And the state store using Azure Blob Storage:

componentType: state.azure.blobstorage
version: v1
metadata:
- name: accountName
  value: <STORAGE_ACCOUNT_NAME>
- name: accountKey
  secretRef: storage-account-key
- name: containerName
  value: statestore
secrets:
- name: storage-account-key
  value: "<STORAGE_ACCOUNT_KEY>"
scopes:
- frontend

The scheduled task binding is nice and simple:

componentType: bindings.cron
version: v1
metadata:
- name: schedule
  value: "@every 5m"

The email server component also has a placeholder, this time for the server host name, which we'll fill in before deploying. We really should take this further and override the default maildev username and password for better security (even though this mail server is just for demo purposes).

componentType: bindings.smtp
version: v1
metadata:
- name: host
  value: <MAILDEV_SERVER>
- name: port
  value: 1025
- name: user
  value: "_username"
- name: password
  value: "_password"
- name: skipTLSVerify
  value: true
scopes:
- ordering

And here's the PowerShell to create temporary component definition files that replace the placeholder values above ($COMPONENTS_FOLDER is the path to the folder containing the ACA component YAML files):

(Get-Content -Path "$COMPONENTS_FOLDER/pubsub.yaml" -Raw).Replace('<SERVICE_BUS_CONNECTION_STRING>',$SERVICE_BUS_CONNECTION_STRING) | Set-Content -Path "$COMPONENTS_FOLDER/pubsub.tmp.yaml" -NoNewline

(Get-Content -Path "$COMPONENTS_FOLDER/statestore.yaml" -Raw).Replace('<STORAGE_ACCOUNT_KEY>',$STORAGE_ACCOUNT_KEY).Replace('<STORAGE_ACCOUNT_NAME>',$STORAGE_ACCOUNT) | Set-Content -Path "$COMPONENTS_FOLDER/statestore.tmp.yaml" -NoNewline

(Get-Content -Path "$COMPONENTS_FOLDER/sendmail.yaml" -Raw).Replace('<MAILDEV_SERVER>',$MAILDEV_SERVER) | Set-Content -Path "$COMPONENTS_FOLDER/sendmail.tmp.yaml" -NoNewline
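The three one-liners above follow the same pattern, so they could be folded into a small function that also checks no placeholder was missed. This is only a sketch. `Expand-ComponentTemplate` is a hypothetical helper name, and it assumes placeholders always look like `<UPPER_CASE_NAME>`, as they do in the files in this post.

```powershell
# Hypothetical helper (not in the original script): fill <PLACEHOLDER>
# tokens in a component template and write the result to another file.
function Expand-ComponentTemplate {
    param(
        [Parameter(Mandatory)] [string] $TemplatePath,
        [Parameter(Mandatory)] [string] $OutputPath,
        [Parameter(Mandatory)] [hashtable] $Values
    )
    $content = Get-Content -Path $TemplatePath -Raw
    foreach ($key in $Values.Keys) {
        # String.Replace is a literal replacement, so special characters in
        # connection strings and keys are copied as-is
        $content = $content.Replace("<$key>", [string] $Values[$key])
    }
    # Fail if any <UPPER_CASE> token was left unfilled
    $leftover = [regex]::Match($content, '<[A-Z][A-Z0-9_]*>')
    if ($leftover.Success) {
        throw "Unfilled placeholder $($leftover.Value) in $TemplatePath"
    }
    Set-Content -Path $OutputPath -Value $content -NoNewline
}

# Example, using the pub/sub component from this post:
# Expand-ComponentTemplate -TemplatePath "$COMPONENTS_FOLDER/pubsub.yaml" `
#     -OutputPath "$COMPONENTS_FOLDER/pubsub.tmp.yaml" `
#     -Values @{ SERVICE_BUS_CONNECTION_STRING = $SERVICE_BUS_CONNECTION_STRING }
```

Since the temporary files contain real secrets, it is probably worth deleting them once the components have been deployed, for example with `Remove-Item "$COMPONENTS_FOLDER/*.tmp.yaml"`.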

Step 5 - Deploy the Dapr components

Now we need to register our Dapr components with our container apps environment, which we can do with the az containerapp env dapr-component set command. Note that the name of the component is provided as an argument rather than specified in the YAML. Also note that we're using the temporary YAML files that actually contain the secret values needed for connection.

az containerapp env dapr-component set `
    --name $CONTAINERAPPS_ENVIRONMENT --resource-group $RESOURCE_GROUP `
    --dapr-component-name pubsub `
    --yaml "$COMPONENTS_FOLDER/pubsub.tmp.yaml"

az containerapp env dapr-component set `
    --name $CONTAINERAPPS_ENVIRONMENT --resource-group $RESOURCE_GROUP `
    --dapr-component-name shopstate `
    --yaml "$COMPONENTS_FOLDER/statestore.tmp.yaml"

az containerapp env dapr-component set `
    --name $CONTAINERAPPS_ENVIRONMENT --resource-group $RESOURCE_GROUP `
    --dapr-component-name sendmail `
    --yaml "$COMPONENTS_FOLDER/sendmail.tmp.yaml"

az containerapp env dapr-component set `
    --name $CONTAINERAPPS_ENVIRONMENT --resource-group $RESOURCE_GROUP `
    --dapr-component-name scheduled `
    --yaml "$COMPONENTS_FOLDER/scheduled.yaml"
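The four registrations differ only in the component name and the YAML file, so a loop is one way to cut the repetition and to stop as soon as one of them fails. This is a sketch rather than what my script does. `Register-DaprComponents` is a hypothetical helper name.

```powershell
# Hypothetical helper (not in the original script): register several Dapr
# components in one pass and stop at the first failure.
function Register-DaprComponents {
    param(
        [Parameter(Mandatory)] [string] $Environment,
        [Parameter(Mandatory)] [string] $ResourceGroup,
        [Parameter(Mandatory)] [string] $Folder,
        # maps component name -> YAML file name inside $Folder
        [Parameter(Mandatory)] [System.Collections.IDictionary] $Components
    )
    foreach ($name in $Components.Keys) {
        az containerapp env dapr-component set `
            --name $Environment --resource-group $ResourceGroup `
            --dapr-component-name $name `
            --yaml (Join-Path $Folder $Components[$name])
        # az is an external command, so check its exit code explicitly
        if ($LASTEXITCODE -ne 0) {
            throw "Failed to register Dapr component '$name'"
        }
    }
}

# Example, using the names from this post:
# Register-DaprComponents -Environment $CONTAINERAPPS_ENVIRONMENT `
#     -ResourceGroup $RESOURCE_GROUP -Folder $COMPONENTS_FOLDER `
#     -Components ([ordered]@{
#         pubsub    = 'pubsub.tmp.yaml'
#         shopstate = 'statestore.tmp.yaml'
#         sendmail  = 'sendmail.tmp.yaml'
#         scheduled = 'scheduled.yaml'
#     })
```

Afterwards, `az containerapp env dapr-component list --name $CONTAINERAPPS_ENVIRONMENT --resource-group $RESOURCE_GROUP -o table` should show which components ended up registered.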

Step 6 - Deploy the microservices to Azure Container Apps

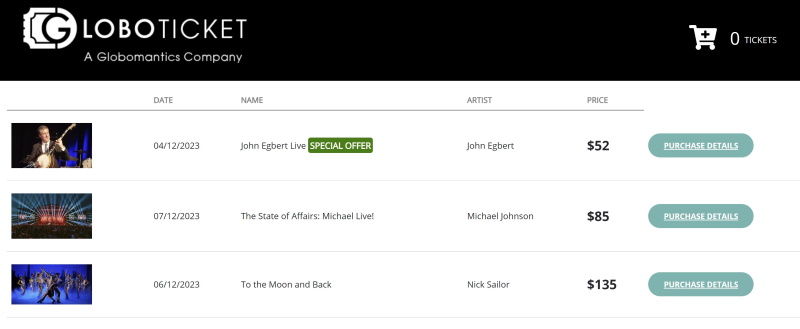

Now we're ready to deploy the three microservices that make up the GloboTicket demo app, which we can do with az containerapp create.

The first is the frontend microservice. Note that this has an "external" ingress configured, and that we're enabling Dapr and telling it the name of the service and the port it's listening on.

The container image is just using a build of the microservice that I have in Docker Hub, but of course in production we'd probably use an Azure Container Registry to store our images.

az containerapp create `
    --name frontend `
    --resource-group $RESOURCE_GROUP `
    --environment $CONTAINERAPPS_ENVIRONMENT `
    --image markheath/globoticket-dapr-frontend `
    --target-port 80 `
    --ingress 'external' `
    --min-replicas 1 `
    --max-replicas 1 `
    --enable-dapr `
    --dapr-app-port 80 `
    --dapr-app-id frontend

The catalog microservice is similar, but the ingress is internal, because we do not want to expose this microservice to the internet.

Note that the environment variables and secrets I've configured here are not currently working as a way to retrieve secrets; this is something we're still waiting on Azure Container Apps to support better. However, the catalog microservice doesn't need those secrets, since the database is in-memory for this demo.

az containerapp create `
    --name catalog `
    --resource-group $RESOURCE_GROUP `
    --environment $CONTAINERAPPS_ENVIRONMENT `
    --image markheath/globoticket-dapr-catalog `
    --target-port 80 `
    --ingress 'internal' `
    --min-replicas 1 `
    --max-replicas 1 `
    --enable-dapr `
    --dapr-app-port 80 `
    --dapr-app-id catalog `
    --env-vars SECRET_STORE_NAME="Kubernetes" eventcatalogdb="DBConnectionStringFromEnvVar" `
    --secrets "eventcatalogdb=EventCatalogConnectionStringFromContainerApps"

And the final ordering microservice is similar to the others...

az containerapp create `
    --name ordering `
    --resource-group $RESOURCE_GROUP `
    --environment $CONTAINERAPPS_ENVIRONMENT `
    --image markheath/globoticket-dapr-ordering `
    --target-port 80 `
    --ingress 'internal' `
    --min-replicas 1 `
    --max-replicas 1 `
    --enable-dapr `
    --dapr-app-port 80 `
    --dapr-app-id ordering
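Since the three az containerapp create calls share almost all of their arguments, they could be wrapped in a function that builds the argument list once. The following is a sketch under a few assumptions. `New-GloboTicketApp` is a hypothetical helper name, it relies on $RESOURCE_GROUP and $CONTAINERAPPS_ENVIRONMENT being set as earlier in this post, and it assumes each image is named after its service, as the three images above are.

```powershell
# Hypothetical helper (not in the original script): create one of the
# GloboTicket container apps with the settings shared by all three.
function New-GloboTicketApp {
    param(
        [Parameter(Mandatory)] [string] $Name,
        [Parameter(Mandatory)] [ValidateSet('external', 'internal')] [string] $Ingress,
        # any additional az arguments, e.g. --env-vars or --secrets
        [string[]] $ExtraArgs = @()
    )
    $azArgs = @(
        'containerapp', 'create',
        '--name', $Name,
        '--resource-group', $RESOURCE_GROUP,
        '--environment', $CONTAINERAPPS_ENVIRONMENT,
        '--image', "markheath/globoticket-dapr-$Name",
        '--target-port', '80',
        '--ingress', $Ingress,
        '--min-replicas', '1',
        '--max-replicas', '1',
        '--enable-dapr',
        '--dapr-app-port', '80',
        '--dapr-app-id', $Name
    ) + $ExtraArgs
    # splat the array so each element is passed as a separate argument
    az @azArgs
    if ($LASTEXITCODE -ne 0) { throw "Failed to create container app '$Name'" }
}

# Example:
# New-GloboTicketApp -Name frontend -Ingress external
# New-GloboTicketApp -Name ordering -Ingress internal
```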

Step 7 - Testing the application

Now that we've finally got all the microservices published, it's time to test. One really nice thing about Azure Container Apps is that, for services with external ingress, SSL certificates are automatically configured, allowing us to access the site using HTTPS. Here's how to find out the randomly assigned DNS name for the frontend microservice, and view it in a browser:

$FQDN = az containerapp show --name frontend --resource-group $RESOURCE_GROUP `
    --query properties.configuration.ingress.fqdn -o tsv

# launch frontend in a browser
Start-Process "https://$FQDN"

We can also use the Azure CLI to view the logs for any of the microservices if we want:

az containerapp logs show -n frontend -g $RESOURCE_GROUP

az containerapp logs show -n catalog -g $RESOURCE_GROUP

az containerapp logs show -n ordering -g $RESOURCE_GROUP

And if we want to view the maildev server that we hosted in Azure Container Instances to check that our order emails are getting sent, we can do so with:

Start-Process "http://$($MAILDEV_SERVER):1080"
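If you'd rather check the deployment from the script than from a browser, a small retry loop can help, since a freshly created app may take a little while to respond. This is a sketch, not part of my original script. `Invoke-WithRetry` is a hypothetical helper name, and the attempt count and delay are arbitrary defaults.

```powershell
# Hypothetical helper (not in the original script): run an action, retrying
# a few times before giving up and rethrowing the last error.
function Invoke-WithRetry {
    param(
        [Parameter(Mandatory)] [scriptblock] $Action,
        [int] $MaxAttempts = 5,
        [int] $DelaySeconds = 10
    )
    for ($attempt = 1; $attempt -le $MaxAttempts; $attempt++) {
        try {
            return & $Action
        }
        catch {
            # out of attempts: rethrow the original error
            if ($attempt -eq $MaxAttempts) { throw }
            Write-Warning "Attempt $attempt failed: $($_.Exception.Message)"
            Start-Sleep -Seconds $DelaySeconds
        }
    }
}

# Example: wait for the frontend to return a successful response
# Invoke-WithRetry { (Invoke-WebRequest -Uri "https://$FQDN" -UseBasicParsing).StatusCode }
```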

Summary

Azure Container Apps is a really exciting new service that promises to make microservices hosting much simpler to get started with compared with deploying to a regular Kubernetes cluster. It's great that Dapr support is already included, although there are a few improvements needed, particularly around secret management.

A place for me to share what I'm learning: Azure, .NET, Docker, audio, microservices, and more...
