Working with Linux and Windows Containers simultaneously on Docker Desktop

With Docker Desktop, developers using Windows 10 can not only run Windows containers, but also Linux containers.

Windows and Linux container modes

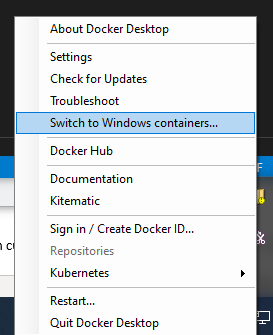

The way this works is that Docker for Desktop has two modes that you can switch between: Windows containers, and Linux containers. To switch, you use the right-click context menu in the system tray:

When you're in Linux containers mode, behind the scenes your Linux containers are running in a Linux VM. However, that is set to change in the future thanks to Linux Containers on Windows (LCOW), which is currently an "experimental" feature of Docker Desktop. The upcoming Windows Subsystem for Linux 2 (WSL2) also promises to make this even better.

But in this article, I'm using Docker Desktop without these experimental features enabled, so I'll need to switch between Windows and Linux modes.
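
The switch can also be scripted rather than clicked. Here's a minimal sketch, assuming Git Bash on Windows and Docker Desktop installed at its default path (both assumptions, as is the helper name switch_mode). It relies on DockerCli.exe -SwitchDaemon, which toggles to whichever mode is not currently active:

```shell
# Sketch: toggle Docker Desktop between Linux and Windows container modes.
# Assumption: Git Bash on Windows with Docker Desktop at its default install
# path. Set DOCKER_CLI beforehand if yours lives elsewhere.
DOCKER_CLI="${DOCKER_CLI:-/c/Program Files/Docker/Docker/DockerCli.exe}"

# Flips to whichever mode is not currently active.
switch_mode() {
  "$DOCKER_CLI" -SwitchDaemon
}
```

The switch can take a little while, so it's worth confirming it has completed (for example with docker version) before running further commands.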

Mixed container types

What happens if you have a microservices application that needs to use a mixture of Windows and Linux containers? This is often necessary when you have legacy services that can only run on Windows, but at the same time you want to benefit from the smaller size and lower resource requirements of Linux containers when creating new services.

We're starting to see much better support for mixing Windows and Linux containers in the cloud. For example, Azure Kubernetes Service (AKS) supports multiple node pools, so you can add a Windows Server node pool to your cluster.

But if you create an application using a mix of Windows and Linux container types, is it possible to run it locally with Docker Desktop?

The answer is yes. When you switch modes in Docker Desktop, any running containers continue to run, so it's quite possible to have both Windows and Linux containers running locally at the same time.

Testing it out

To test this out, I created a very simple ASP.NET Core web application. This makes it easy for me to build both Linux and Windows versions of the same application. The web application displays a message showing what operating system the container is running in, and then makes a request to an API on the other container, allowing me to prove that both the Linux and Windows containers are able to talk to each other.

I created the app with dotnet new webapp, which uses Razor pages, and added a simple Dockerfile:

FROM mcr.microsoft.com/dotnet/core/sdk:3.0 AS build
WORKDIR /app

# copy csproj and restore as distinct layers
COPY *.csproj .
RUN dotnet restore

# copy everything else and build app
COPY . .
RUN dotnet publish -c Release -o /out/

FROM mcr.microsoft.com/dotnet/core/aspnet:3.0 AS runtime
WORKDIR /app
COPY --from=build /out .
ENTRYPOINT ["dotnet", "cross-plat-docker.dll"]

In the main index.cshtml razor page view, I display a simple message to show the OS version and the message received from the other container.

<h1 class="display-4">Welcome from @System.Environment.OSVersion.VersionString</h1>
<p>From @ViewData["Url"]: @ViewData["Message"]</p>

In the code behind, we get the URL to fetch from config, and then call it, adding its response to the ViewData dictionary.

public async Task OnGet()
{
    var client = _httpClientFactory.CreateClient();
    var url = _config.GetValue("FetchUrl", "https://markheath.net/");
    ViewData["Url"] = url;
    try
    {
        var message = await client.GetStringAsync(url);
        ViewData["Message"] = message.Length > 4000 ? message.Substring(0, 4000) : message;
    }
    catch (Exception e)
    {
        _logger.LogError(e, $"couldn't download {url}");
        ViewData["Message"] = e.Message;
    }
}

This page also has an additional GET endpoint for the other container to call. It uses a routing feature of ASP.NET Core Razor Pages called named handler methods, which was new to me. If we create a method on our Razor page called OnGetXYZ and then request the page route with the query string ?handler=XYZ, the request is handled by that method instead of the regular OnGet method.

This allowed me to return some simple JSON.

public IActionResult OnGetData()
{
    // Nothing is awaited here, so the method doesn't need to be async
    return new JsonResult(new[] { "Hello world",
        Environment.OSVersion.VersionString });
}
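
To see the routing from outside the app, the handler can be requested with any HTTP client. Here's a small sketch using curl from a POSIX shell (Git Bash or WSL, say). The function name fetch_handler and the base URL in the usage note are illustrative assumptions, not part of the project:

```shell
# fetch_handler BASE_URL [HANDLER]
# Requests the page root; when HANDLER is given, adds ?handler=HANDLER so that
# Razor Pages dispatches to OnGet<HANDLER> instead of the plain OnGet.
fetch_handler() {
  local base_url="${1%/}"   # drop a trailing slash, if any
  local handler="${2:-}"
  if [ -n "$handler" ]; then
    curl -fsS "${base_url}/?handler=${handler}"
  else
    curl -fsS "${base_url}/"
  fi
}
```

With the port mappings used later in this post, and assuming the published port is reachable on localhost from the host, something like fetch_handler http://localhost:32770 data should return the JSON array from OnGetData, while fetch_handler http://localhost:32770 returns the HTML page.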

I've put the whole project up on GitHub if you want to see the code.

Building the containers

To build the Linux container, switch Docker Desktop into Linux mode (you can check it's completed the switch by running docker version), and issue the following command from the folder containing the Dockerfile.

docker image build -t crossplat:linux .

And then to build the Windows container, switch Docker into Windows mode, and issue this command:

docker image build -t crossplat:win .
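
Because the same Dockerfile produces either image, it's easy to tag a build with the wrong OS after a mode switch. Here's a minimal sketch that derives the tag from the OS the daemon reports. It assumes a POSIX shell with the docker CLI on the PATH, and the function names are my own:

```shell
# Prints "linux" or "windows" depending on which daemon the CLI is talking to.
docker_mode() {
  docker version --format '{{.Server.Os}}'
}

# Maps the mode to the image tags used in this post.
tag_for_mode() {
  case "$1" in
    linux)   echo "crossplat:linux" ;;
    windows) echo "crossplat:win" ;;
    *)       echo "unknown mode: $1" >&2; return 1 ;;
  esac
}

# Builds the Dockerfile in the current folder with the matching tag.
build_current_mode() {
  local tag
  tag="$(tag_for_mode "$(docker_mode)")" || return 1
  docker image build -t "$tag" .
}
```

Running build_current_mode once in each mode should then produce crossplat:linux and crossplat:win without retyping the tag.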

Running the containers

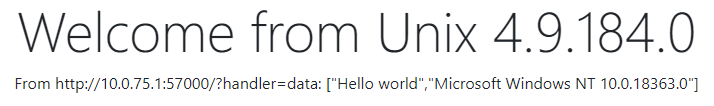

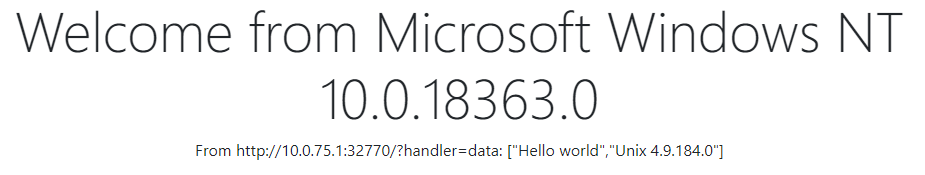

To run the contains, we need to use docker run, and expose a port. I'm setting up the app in the container to listen on port 80, and exposing it as port 57000 for the Windows container and 32770 for the Linux container.

But I'm also using an environment variable to tell each container where to find the other. This raises the question of what IP address should the Linux and Windows containers use in order to communicate with each other.

I tried a few different approaches. localhost doesn't work, and if you try using one of the IP addresses of your machine (as listed by ipconfig) you might be able to find one that works. However, I chose to go for 10.0.75.1. This is a special IP address used by Docker Desktop. This worked for me with both the Windows and Linux containers able to contact each other, but I don't know whether this is the best choice.

With Docker Desktop in Linux mode, I ran the following command to start the Linux container, listening on port 32770 and attempting to fetch data from the Windows container:

docker run -p 32770:80 -d -e ASPNETCORE_URLS="http://+80" `

-e FetchUrl="http://10.0.75.1:57000/?handler=data" crossplat:linux

And with Docker Desktop in Windows mode, I ran the following command to listen on port 57000 and attempting to fetch data from the Linux container.

docker run -p 57000:80 -d -e ASPNETCORE_URLS="http://+80" `

-e FetchUrl="http://10.0.75.1:32770/?handler=data" crossplat:win

Results

Here's the Linux container successfully calling the Windows container:

And here's the Windows Container successfully calling the Linux container:

In this post, we've demonstrated that it's quite possible to simultaneously run Windows and Linux containers on Docker Desktop, and for them to communicate with each other.

Apart from the slightly clunky mode switching that's required, it was easy to do (and that mode switching could well go away in the future thanks to LCOW and WSL2).

What this means is that it's very easy for teams that need to work on a mixture of container types to do so locally, as well as in the cloud.

A place for me to share what I'm learning: Azure, .NET, Docker, audio, microservices, and more...
